Relying on market share to predict a technology’s death is a recipe for strategic failure; the real leading indicator is the silent exodus of its developer community.

- A technology’s vitality is directly tied to “developer gravity”—its ability to attract and retain talent. A decline in this area signals imminent obsolescence.

- Migration isn’t a crisis, but a predictable operational cycle that can be budgeted for. The cost of inaction far outweighs the one-time cost of a planned transition.

Recommendation: Shift your focus from economic reports to socio-technical signals. Start monitoring developer ecosystem health and build a strategic fund for inevitable tech migrations.

For Chief Information Officers and strategic planners, navigating the technology landscape feels like charting a course through a perpetual storm. The pressure to invest in tools that provide a competitive edge is immense, yet the risk of backing a technology destined for the graveyard is equally high. The conventional wisdom is to track market share, follow industry analyst reports, and listen to sales pitches. But these are lagging indicators. By the time a technology’s market share plummets, it’s already too late; you’re saddled with a legacy system, mounting technical debt, and a costly migration crisis.

The conversation often revolves around shiny new objects and hype cycles, from AI-powered everything to the next blockchain revolution. We are told to be “data-driven,” yet we rely on data that reflects the past, not the future. This approach misses the most critical, predictive signals—the human element. What if the true barometer of a technology’s future isn’t its current install base, but the passion, engagement, and belief of the builders who sustain it?

This analysis takes a different approach. We argue that a technology doesn’t die when the last customer leaves; it begins its death spiral the moment developers lose faith. The most accurate forecast comes not from financial reports, but from the subtle, often-overlooked socio-technical signals within a technology’s ecosystem. By learning to read these signs, you can move from reactive crisis management to proactive, visionary planning. We will explore the leading indicators of tech obsolescence, from the first whispers of developer discontent to the financial traps of vendor lock-in, providing a framework to ensure your investments today are still assets in three years, not liabilities.

This article provides a comprehensive framework for identifying these predictive signals. By understanding the true drivers of technological longevity and decay, you can build a more resilient and forward-looking technology strategy. The following sections break down the critical areas you need to monitor.

Summary: A Futurist’s Guide to Tech Obsolescence

- Why Declining Developer Activity Is the First Sign of Dead Tech?

- How to Budget for the Inevitable Migration Away from Legacy SaaS?

- Hype Cycle vs Real Value: How to Spot a Bubble Before You Buy?

- The Acquisition Trap: What Happens When Your Vendor Gets Bought and Killed?

- When to Adopt New Tech: The “Early Adopter” Tax vs The “Laggard” Cost

- MEAN vs MERN Stack: Which JavaScript Framework Attracts Better Talent?

- The Vendor Lock-In Trap: How to Avoid Being Held Hostage by Your Provider

- SaaS Software vs Custom Build: Which Is Best for a $50k Budget?

Why Declining Developer Activity Is the First Sign of Dead Tech?

Market share is a vanity metric; developer gravity is sanity. A technology’s true vitality isn’t measured by its number of users, but by its ability to attract and retain the talent that builds, maintains, and innovates upon it. When this “developer gravity” weakens, a technology enters a terminal decline, often years before it’s reflected in sales figures. This is because a shrinking developer pool leads to a cascade of failures: slower bug fixes, a stagnant feature roadmap, poor documentation, and a collapsing third-party ecosystem. For a strategist, monitoring this is the equivalent of seeing the storm clouds gather long before the rain begins.

The crypto space provides a stark, recent example. While headlines focused on fluctuating coin prices, the real story was happening on GitHub. A 75% drop in weekly crypto commits from their peak signaled a massive talent exodus toward more promising fields like AI. The exodus wasn’t uniform; platforms like Ethereum and Solana saw active developers drop by 34-40%, while others experienced even steeper declines. This illustrates how quickly developer sentiment can shift, leaving once-hot platforms on life support. A CIO who only watched market caps would have missed the underlying signal: these platforms’ ability to evolve was evaporating.

Assessing this ecosystem vitality requires moving beyond simple commit counts. It’s about measuring the health of the community. Are questions on Stack Overflow being answered? Are pull requests being reviewed in a timely manner? Is the ratio of resolved to unresolved issues improving? A decline in these metrics is a critical red flag that the core community is disengaging. This is the first and most reliable signal that a technology is on the path to obsolescence.

Action Plan: Your Ecosystem Vitality Health Check

- Points of contact: Monitor GitHub activity (commits, issues), Stack Overflow question trends, and community forum/Discord engagement.

- Data collection: Record key metrics weekly: ratio of resolved to unresolved issues, average time to close critical bug reports, and pull request review rates.

- Coherence: Compare this data with the vendor’s public roadmap. Do their promises align with the community’s visible output?

- Sentiment: Gauge the mood in developer forums. Is the tone one of excitement and problem-solving, or frustration and abandonment?

- Integration plan: If red flags appear, immediately begin researching alternatives and allocate a pilot project to test a potential replacement technology.
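The metrics in the checklist above can be computed from exported issue data. The following is a minimal sketch, not a definitive implementation: the `Issue` record, the function names, and the four-week comparison window are all assumptions made for illustration, and the sample data is invented. In practice the records would come from whatever issue tracker the project uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class Issue:
    """Hypothetical record for one tracked issue."""
    opened: datetime
    closed: Optional[datetime] = None  # None means the issue is still open
    critical: bool = False


def resolved_ratio(issues: list[Issue]) -> float:
    """Share of issues that have been closed (0.0 to 1.0)."""
    if not issues:
        return 0.0
    return sum(1 for i in issues if i.closed is not None) / len(issues)


def avg_days_to_close_critical(issues: list[Issue]) -> Optional[float]:
    """Mean days from open to close, over closed critical issues only."""
    durations = [
        (i.closed - i.opened).total_seconds() / 86400
        for i in issues
        if i.critical and i.closed is not None
    ]
    return mean(durations) if durations else None


def is_declining(weekly_values: list[float], window: int = 4) -> bool:
    """True if the latest `window` weeks average below the previous `window`."""
    if len(weekly_values) < 2 * window:
        return False  # not enough history to compare
    recent = mean(weekly_values[-window:])
    previous = mean(weekly_values[-2 * window:-window])
    return recent < previous


# Invented sample data, for illustration only.
start = datetime(2025, 1, 1)
issues = [
    Issue(start, start + timedelta(days=2), critical=True),
    Issue(start, start + timedelta(days=6), critical=True),
    Issue(start, None),
    Issue(start, start + timedelta(days=1)),
]
print(resolved_ratio(issues))              # 0.75
print(avg_days_to_close_critical(issues))  # 4.0
print(is_declining([0.8, 0.8, 0.8, 0.8, 0.7, 0.6, 0.6, 0.5]))  # True
```

Comparing two rolling windows, rather than week against week, is one way to avoid reacting to a single quiet week; the window length is a judgment call.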

How to Budget for the Inevitable Migration Away from Legacy SaaS?

Most organizations treat technology migration as an unforeseen crisis, a fire to be extinguished with an emergency budget. A forward-thinking strategist, however, views it as a predictable and manageable part of the technology lifecycle. Obsolescence is not a matter of *if*, but *when*. The key is to transform migration from a reactive expense into a proactive, strategic investment. This begins with a clear-eyed assessment of the alternative: the staggering cost of inaction.

Staying with a legacy SaaS platform incurs significant hidden costs that compound over time. These include lost productivity from staff wrestling with inefficient workflows, missed revenue opportunities due to a lack of modern features, and increasing security risks from unsupported software. For large companies, this isn’t a trivial amount; analysis shows the cost of SaaS inaction can range from $21M to $127M annually in wasted spend. By quantifying these “inaction costs,” you can build a powerful business case for a planned migration, showing that the one-time investment is far less than the slow, continuous bleed of sticking with the status quo.

Strategic budgeting for obsolescence involves creating a dedicated “technology renewal fund.” This isn’t a slush fund, but a calculated allocation based on the average lifespan of your critical software (typically 5-7 years). By setting aside a fraction of the budget each year, you ensure that when a core platform shows signs of decay, the resources for a smooth transition are already in place. This approach de-risks the entire process, allowing for proper planning, data cleansing, and user training, rather than a rushed, chaotic implementation. The following table illustrates how the long-term cost of doing nothing often dwarfs the upfront cost of moving forward.

| Cost Category | Annual Inaction Cost | One-Time Migration Cost |
|---|---|---|
| Staff Inefficiency | $50K-$75K | Training: $15K |
| Missed Revenue | $30K-$50K | Temp Productivity Loss: $20K |
| Security/Compliance Risk | $25K-$40K | Data Migration: $25K |
| Member Attrition | $20K-$35K | Implementation: $40K |
| Total | $125K-$200K/year | $100K one-time |
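To make the comparison concrete, the arithmetic behind the table can be sketched as a break-even calculation plus a yearly renewal-fund contribution. This is an illustrative sketch: the function names are hypothetical, and it assumes that inaction costs stop entirely once the migration is complete and that the fund is built in equal yearly instalments, both of which are simplifications.

```python
def breakeven_months(migration_cost: float, annual_inaction_cost: float) -> float:
    """Months until avoided inaction costs equal the one-time migration cost.

    Assumes inaction costs accrue evenly and vanish after migration.
    """
    if annual_inaction_cost <= 0:
        raise ValueError("annual_inaction_cost must be positive")
    return 12 * migration_cost / annual_inaction_cost


def annual_renewal_contribution(expected_migration_cost: float,
                                lifespan_years: float) -> float:
    """Equal yearly set-aside so the fund covers migration at end of life."""
    if lifespan_years <= 0:
        raise ValueError("lifespan_years must be positive")
    return expected_migration_cost / lifespan_years


# Figures taken from the table above.
print(f"{breakeven_months(100_000, 125_000):.1f}")  # 9.6 (low inaction estimate)
print(f"{breakeven_months(100_000, 200_000):.1f}")  # 6.0 (high inaction estimate)

# Yearly set-aside over the 5-7 year lifespan mentioned earlier.
print(f"{annual_renewal_contribution(100_000, 5):.0f}")  # 20000
print(f"{annual_renewal_contribution(100_000, 7):.0f}")  # 14286
```

Under these assumptions the table’s migration pays for itself in roughly six to ten months, and funding it takes about $14K-$20K per year.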

Hype Cycle vs Real Value: How to Spot a Bubble Before You Buy?

In the tech world, hype is a currency. New technologies are often launched on a wave of venture capital funding, slick marketing, and grand promises that can be difficult to distinguish from genuine innovation. For a CIO, falling for a “hype bubble” means investing in a tool that solves a non-existent problem or one whose underlying technology is unsustainable. The challenge is to develop a framework for separating a technology with a solid foundation from one floating on hot air. This requires looking past the marketing and scrutinizing the substance.

One of the clearest indicators of hype is a focus on vague benefits rather than quantified results. A vendor that showcases a gallery of impressive client logos but can’t provide case studies with concrete, metric-driven ROI is a major red flag. Similarly, analyze their documentation: is it a collection of glossy “Getting Started” guides, or does it include deep, technical “Troubleshooting at Scale” sections? The former is built for acquisition, the latter for retention and real-world use. This distinction is crucial for understanding if a product is designed for a quick flip or for long-term enterprise value.

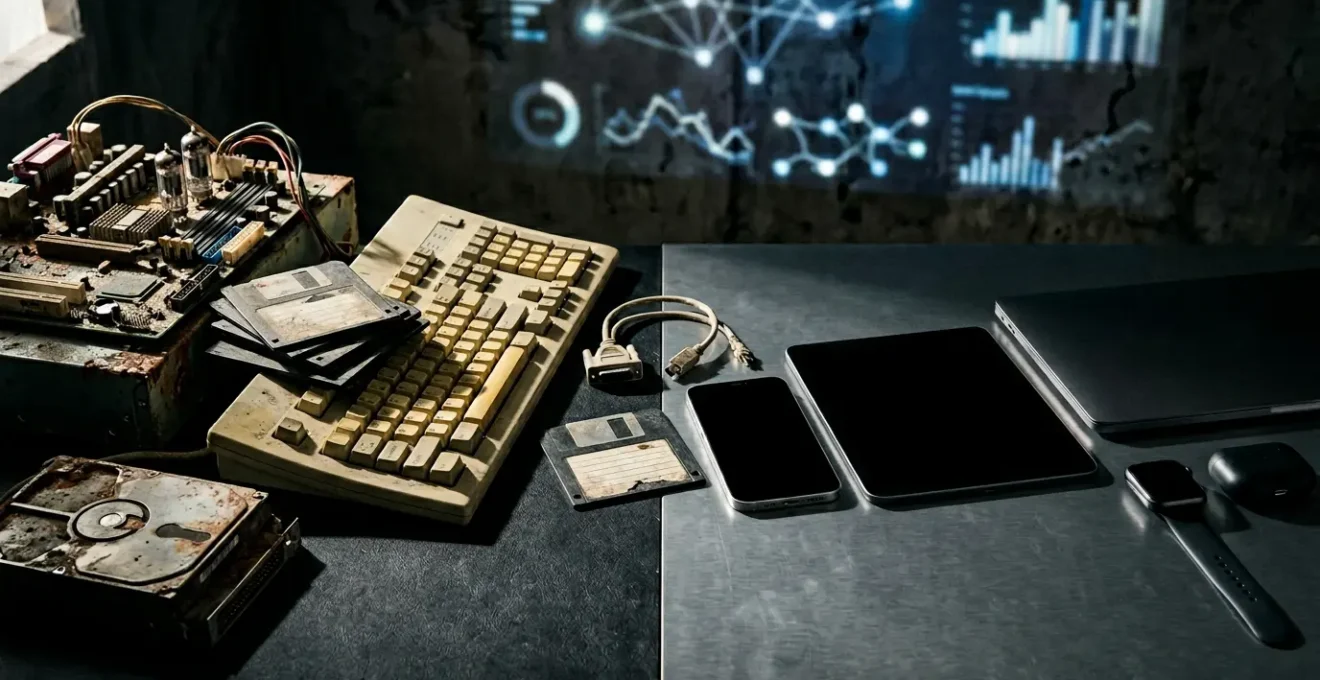

As the image suggests, the difference between hype and reality is one of substance. Bubbles are translucent and weightless, while real value is solid and measurable. Visionary thinkers are already predicting a market correction against unsubstantiated claims. As Max Willens of EMARKETER noted, the future may hold a significant backlash against over-hyped trends.

“A company will publicly repudiate generative AI as a technology, thereby setting off a big conversation in tech and media about its efficacy and usefulness.”

– Max Willens, EMARKETER Behind the Numbers Podcast

This prediction highlights the need for critical evaluation. Before investing, ask the most fundamental question: can the vendor clearly articulate the specific problem their technology solves, and is that a problem your organization actually has? If the answer is muddled or relies on buzzwords, you are likely looking at a bubble, not a breakthrough.

The Acquisition Trap: What Happens When Your Vendor Gets Bought and Killed?

One of the most abrupt paths to obsolescence is the “acquisition trap.” Your organization depends on a critical SaaS tool from a nimble, innovative vendor. Suddenly, that vendor is acquired by a tech giant. The initial press release is full of optimistic synergy-speak, but soon, the signs of a “kill” acquisition emerge. The product roadmap goes silent, key founders and engineers depart, support quality plummets, and eventually, the dreaded “end-of-life” announcement arrives, forcing you into a costly and disruptive migration on the acquirer’s timeline, not yours.

This isn’t a rare occurrence; it’s a common strategic play. A large company may acquire a smaller competitor not for its technology, but to eliminate it and migrate its customer base to its own, often inferior, platform. The same pattern appears at a macro level, where platform owners can “kill” entire technologies. The third-party cookie is a prime example. Citing privacy concerns, Safari and Firefox began blocking third-party cookies by default, and Google spent years announcing and then postponing a phase-out in Chrome before abandoning the plan in 2024. Each of these decisions, made by a handful of platform owners, forced ad-tech vendors and marketers to rework tools and strategies on someone else’s timeline. Browser-level blocking was a win for consumer privacy, but a brutal lesson for any company whose business model was built entirely on a technology it didn’t control.

To avoid this trap, due diligence must extend beyond the product to the vendor’s own viability and strategic position. Is the vendor heavily reliant on venture capital, making it a prime acquisition target? Or does it have a sustainable business model? Proactive legal and contractual safeguards are your best defense. Negotiating a “termination for convenience” clause allows you to exit the contract without penalty if the service degrades post-acquisition. For truly mission-critical software, an even stronger protection is a source code escrow agreement. This ensures that if the vendor goes bust or sunsets the product, you receive access to the source code, giving you a lifeline to maintain the system or migrate on your own terms.

When to Adopt New Tech: The “Early Adopter” Tax vs The “Laggard” Cost

Every new technology presents a strategic dilemma: adopt too early and you pay the “early adopter tax,” but wait too long and you’re hit with the “laggard cost.” Neither position is inherently right or wrong; the key is to make a conscious, strategic choice based on the technology’s maturity and its potential impact on your core business. Visionary planning involves calculating and weighing these two opposing costs.

The early adopter tax is paid in the form of bugs, missing features, a lack of community support, and the high cost of specialized expertise. You’re essentially funding the end of the vendor’s R&D cycle. This path is only justifiable for technologies that offer a direct, defensible competitive advantage that is central to your business model. For everything else, being on the bleeding edge is an expensive and unnecessary risk.

Conversely, the laggard cost is the price of inaction. It manifests as a slow erosion of efficiency, competitiveness, and talent retention. As a new technology matures and becomes the industry standard, the productivity gap between adopters and non-adopters widens. The rise of AI is a perfect case study. Analysis already predicts that by 2027, companies that are late to adopt AI will face a 5-15% productivity tax compared to their more agile competitors. This “tax” isn’t just financial; it also means being unable to attract top talent, who will gravitate toward companies using modern tools. The history of CRM adoption illustrates this perfectly; what was once a bleeding-edge tool for innovators in the early 2000s became a standard expectation for nearly every business by 2024, with over 750 vendors competing in a mature market.

The wisest strategy is often to be a “fast follower.” Let the innovators and early adopters absorb the initial risks and costs. Monitor the technology’s adoption curve, ecosystem vitality, and emerging best practices. The ideal time to invest is typically in the “early majority” phase, when the technology has been de-risked but still offers a significant competitive edge before it becomes a commoditized utility.

MEAN vs MERN Stack: Which JavaScript Framework Attracts Better Talent?

The choice of a technology stack is not just a technical decision; it’s a human capital strategy. The frameworks you choose directly influence the type, quality, and availability of talent you can attract. This is a perfect micro-example of “developer gravity” in action. For a CIO, understanding the subtle cultural and demographic differences between two seemingly similar ecosystems, like the MEAN (MongoDB, Express, Angular, Node.js) and MERN (MongoDB, Express, React, Node.js) stacks, is critical for long-term project success and talent retention.

While both are powerful JavaScript-based stacks, they attract developers with different mindsets and career trajectories. Angular (from MEAN) is a structured, opinionated, and comprehensive framework, often favored in large enterprise environments that prioritize stability and long-term maintainability. React (from MERN), on the other hand, is a more flexible UI library rather than a full framework; it excels at rapid prototyping and is immensely popular in the startup world. This difference in philosophy creates distinct talent pools.

Analysis of developer trends reveals these differences in stark detail. The MERN stack, powered by React’s popularity, has shown significantly more momentum and is a stronger magnet for emerging talent. This isn’t just about preference; it’s about a broader shift in the programming landscape. As one analysis of the GitHub 2025 Octoverse Report highlights, “TypeScript overtook Python and JavaScript as the most used programming language.” That trend plays to Angular’s TypeScript-first design, although TypeScript is also widely adopted in React projects. The table below breaks down the talent implications:

| Factor | MEAN Stack (Angular) | MERN Stack (React) |
|---|---|---|
| Developer Mindset | Enterprise-focused, structured | Startup-oriented, rapid prototyping |
| GitHub Activity 2025 | Moderate growth | 25% YoY commit increase |
| Average Salary Range | $110K-$140K | $105K-$135K |
| Talent Availability | More senior developers | Larger junior pool |
| Geographic Concentration | Enterprise hubs | Tech startup cities |

The data is clear: if your goal is to tap into a large, dynamic, and growing pool of developers for a fast-moving project, the MERN stack currently has stronger developer gravity. If your priority is enterprise-grade structure and attracting experienced developers from corporate backgrounds, MEAN remains a solid, defensible choice. The “better” stack depends entirely on your strategic goals for talent acquisition.

The Vendor Lock-In Trap: How to Avoid Being Held Hostage by Your Provider

Vendor lock-in is one of the most insidious strategic risks in a modern tech stack. It occurs when the cost and complexity of switching from one vendor to another become so prohibitive that you are effectively held hostage. This allows the vendor to increase prices, degrade service, and ignore your feature requests with impunity, knowing that your escape routes are cut off. The ultimate expression of this power is the forced migration, where a vendor sunsets a product and compels you to move to their new, often more expensive, platform. This is not a hypothetical risk, and the financial impact on those caught in the trap can be significant.

Strategists must recognize that lock-in isn’t a single event but a multi-layered problem. It begins with Data Lock-in, where your critical information is stored in proprietary formats that are difficult to export. It progresses to Platform Lock-in, where your application becomes deeply intertwined with a specific cloud provider’s managed services (e.g., AWS Lambda, Azure Cosmos DB). Then comes Skillset Lock-in, where your team’s expertise becomes so specialized in one vendor’s toolset that they are unable to work with alternatives. Finally, there’s Financial Lock-in, created by complex, long-term contracts with severe penalties for early termination. These layers combine to create a powerful cage.

The financial consequences are severe. When a vendor knows you are locked in, they can exploit that position during contract renewals or forced upgrades. According to Gartner, by 2026, organizations that fail to build a strategy to mitigate lock-in could see a 35% increase in software costs from forced migrations. Avoiding this trap requires a proactive strategy from day one. Prioritize vendors that offer open standards and robust, well-documented APIs for data export. Favor tools with strong open-source alternatives. During contract negotiations, fight for data ownership clauses and reasonable termination conditions. Building an “exit ramp” before you even get on the highway is the only way to maintain strategic control.

Key Takeaways

- Predicting obsolescence requires shifting focus from lagging economic indicators (market share) to leading socio-technical signals (developer ecosystem health).

- Migration is not a crisis but a predictable operational expense. Budgeting for it proactively is more cost-effective than absorbing the hidden costs of inaction.

- Strategic autonomy is your greatest asset. Mitigate vendor lock-in and acquisition traps through careful due diligence and contractual safeguards before you sign.

SaaS Software vs Custom Build: Which Is Best for a $50k Budget?

The perennial “build vs. buy” debate is the ultimate test of a technology strategist’s foresight. With a constrained budget of $50,000, the decision is even more critical. The common perception is that SaaS is the cheaper, faster option, while a custom build offers more control at a higher price. However, a truly strategic analysis goes beyond the initial investment to consider the 3-year Total Cost of Ownership (TCO) and, most importantly, control over your core competitive differentiator.

A straight TCO comparison often favors SaaS in the short term. The upfront cost is lower, and you get a mature, feature-rich product immediately. However, hidden costs like data migration, mandatory upgrades, and subscription escalations can accumulate. A custom build has a higher initial investment but may have lower long-term costs, primarily for maintenance and hosting. The table below illustrates a typical 3-year TCO scenario, showing how the gap can be narrower than expected. While SaaS appears cheaper, the real cost is often a near-total loss of control over the features that make your business unique.

| Cost Component | SaaS Solution | Custom Build |
|---|---|---|
| Initial Investment | $15K/year subscription | $50K development |
| Year 2-3 Costs | $30K ($15K x 2) | $20K maintenance/hosting |
| Hidden Costs | $10K data migration, upgrades | $15K evolution/scaling |
| 3-Year TCO | $55K | $85K |
| Core Differentiator Control | 20% (inflexible) | 100% (fully customizable) |
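The totals in the table can be reproduced with a few lines of arithmetic, which also makes it easy to test how subscription escalation changes the picture. The sketch below is illustrative only: the function names are hypothetical, and the `escalation` parameter (a yearly price increase applied from year two) is an assumption that the table itself does not include.

```python
def saas_tco(annual_fee: float, hidden: float,
             years: int = 3, escalation: float = 0.0) -> float:
    """Subscription fees over `years`, plus hidden costs.

    `escalation` is a hypothetical yearly price increase (0.10 = 10%),
    compounded from the second year onward.
    """
    fees = sum(annual_fee * (1 + escalation) ** y for y in range(years))
    return fees + hidden


def custom_tco(build: float, later_years: float, hidden: float) -> float:
    """Up-front build cost plus later maintenance/hosting and hidden costs."""
    return build + later_years + hidden


# Figures taken from the table above, with no price escalation.
print(saas_tco(15_000, 10_000))            # 55000.0
print(custom_tco(50_000, 20_000, 15_000))  # 85000

# Same SaaS contract with an assumed 10% yearly price increase.
print(round(saas_tco(15_000, 10_000, escalation=0.10)))
```

Extending `years` beyond three is a quick way to see when, if ever, the custom build’s lower recurring costs close the gap under your own figures.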

The most advanced organizations are moving beyond this binary choice and adopting a hybrid model. The “SaaS-Core, Custom-Edge” strategy provides the best of both worlds. It involves using best-in-class SaaS solutions for commodity functions that are not core to your business—things like authentication, billing, or basic CRM. This leverages the stability and cost-effectiveness of mature platforms. The bulk of the budget, however, is invested in custom-building the “edge”—the unique features and workflows that deliver your specific value proposition and create a competitive moat. This custom component then integrates with the SaaS core via APIs.
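One way to keep the custom edge loosely coupled to the SaaS core is to have it depend on a small interface rather than on a vendor’s client library directly. The following is a minimal sketch under that assumption: `BillingProvider`, `FakeSaaSBilling`, and `renew_subscription` are hypothetical names invented for illustration, and the fake class stands in for a real adapter that would call a vendor’s API.

```python
from typing import Protocol


class BillingProvider(Protocol):
    """Hypothetical interface the custom 'edge' code depends on."""

    def charge(self, customer_id: str, amount_cents: int) -> str:
        """Charge a customer and return a provider-side reference."""
        ...


class FakeSaaSBilling:
    """Stand-in for a SaaS billing adapter; records charges in memory."""

    def __init__(self) -> None:
        self.charges: list[tuple[str, int]] = []

    def charge(self, customer_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        self.charges.append((customer_id, amount_cents))
        return f"fake-{len(self.charges)}"


def renew_subscription(billing: BillingProvider,
                       customer_id: str, plan_price_cents: int) -> str:
    """Custom-edge business logic; it knows only the BillingProvider interface."""
    return billing.charge(customer_id, plan_price_cents)


billing = FakeSaaSBilling()
print(renew_subscription(billing, "cust-42", 4_900))  # prints fake-1
```

Because the business logic sees only the interface, replacing the SaaS vendor means writing one new adapter class rather than touching the custom code, which also limits the platform lock-in described earlier.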

Ultimately, making the right call between SaaS, custom build, or a hybrid model requires applying all the principles of technology foresight. It demands an assessment of TCO, a clear understanding of what is truly core to your business, and a deep respect for maintaining strategic control. To apply this forward-looking framework to your own technology challenges, start by evaluating your current software portfolio through the lens of ecosystem vitality and vendor risk.